How to Pilot an AI Scribe in Your Emergency Department: A Step-by-Step Guide

Most ED leaders understand that AI scribes have the potential to reduce charting burden. The real question is whether they will work in your department, with your physicians, and within your operational constraints.

A failed pilot doesn’t just cost money. It erodes physician trust in new technology, creates skepticism among hospital administrators, and delays future adoption by months or even years. In the ED, the stakes are especially high. Documentation downtime is not an option, patient flow is unpredictable, and charting errors lead directly to billing and compliance risks.

Emergency departments face unique conditions that make AI pilots fundamentally different from those in primary care. Clinics operate with scheduled visits and a single-patient focus. EDs juggle simultaneous cases, frequent interruptions, complex presentations, and ongoing pressure to balance speed with billing accuracy. Tools that work well in clinics often fail under emergency department conditions.

This guide provides a practical framework for running a pilot that produces real evidence instead of opinions. You’ll learn how to select the right pilot team, define meaningful success metrics, follow a structured multi-phase rollout, and translate results into a business case that earns buy-in from both physicians and finance leadership.

Why Most ED AI Scribe Pilots Fail

Understanding common failure patterns helps you avoid them.

Wrong Participants

Many hospitals choose only tech-savvy early adopters, which can lead to artificially positive results. A strong pilot includes both champions and skeptics. Champions show what’s possible. Skeptics reveal whether the tool genuinely solves problems or creates new friction. If your skeptic becomes an advocate by the end of the evaluation period, that’s a strong sign of real product-market fit.

No Baseline Metrics

Without clear before-and-after data on charting time, billing levels, and chart completion, it’s impossible to prove ROI. Qualitative feedback may influence physician perception, but CFOs and CMOs need numbers. Without them, your pilot produces opinions rather than the basis for a sound business decision.

Treating It Like a Primary Care Pilot

Primary care is structured around scheduled appointments and a single-patient focus. Emergency departments manage multiple patients at once, handle frequent interruptions, and require documentation that supports complex billing. An AI scribe that performs well in family medicine may fall apart when a physician is managing three cases while responding to a trauma.

No Multi-Patient Management Testing

The real challenge isn’t capturing a routine visit. It’s maintaining quality when a physician is juggling a chest pain workup in room 4, a psychiatric hold in room 7, and an incoming trauma. Many generic AI scribes lack the architecture to handle this because they weren’t designed for emergency medicine.

Ignoring Billing and Compliance Validation

Clinicians assess clinical accuracy. Revenue cycle teams assess billing compliance and audit risk. The most effective tools, clinical copilots, proactively flag missing billing elements and prompt for compliance-critical documentation in real time. If Health Information Management (HIM) isn’t involved early, billing issues often surface only after full deployment, when it’s too late to fix them.

Pre-Pilot Setup (Preparation Phase)

Success starts before any physician interacts with the AI scribe. The preparation period leading up to deployment determines whether your pilot yields clear, actionable data or inconclusive results.

Build the Right Team

Emergency department AI scribe pilots require cross-functional participation from every group involved in documentation and workflow. A well-rounded team ensures the pilot reflects real-world operations and produces credible outcomes.

Essential team members include:

- Physician champion: Demonstrates what the tool can do and helps troubleshoot workflow issues with peers.

- Physician skeptic: Tests real-world viability. Their feedback reflects the concerns of physicians you’ll need to persuade during rollout.

- ED Medical Director: Offers administrative support and helps navigate organizational politics, especially when it comes to budget approval and EHR integration.

- Revenue cycle or HIM representative: This role is non-negotiable. Reviews AI-generated notes for billing compliance, uncovers missed revenue opportunities, and ensures documentation aligns with CMS standards.

- IT or EHR liaison: Addresses technical issues and ensures the scribe functions smoothly within your existing tech stack.

- Nurse manager: Spots operational friction that physicians might overlook, such as whether AI use affects room turnover or creates complications during documentation handoffs.

Define Success Metrics

Establish your metrics before launch and align the team on what success looks like. A shared definition avoids confusion later and ensures everyone is measuring the same outcomes.

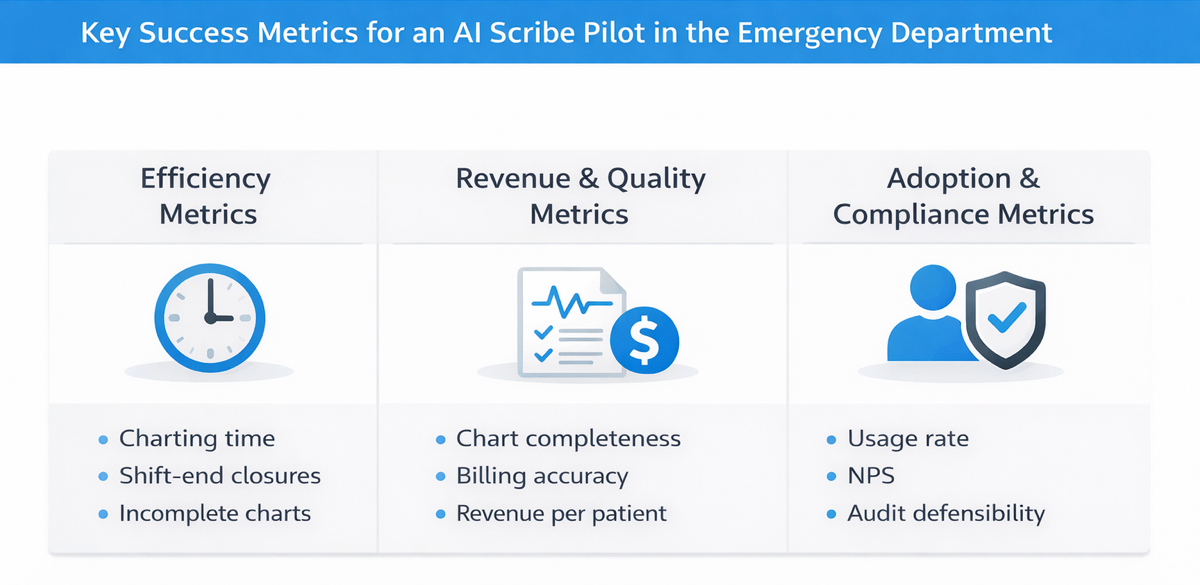

Efficiency Metrics

- Charting time per patient: Compare pre-pilot and pilot averages. This directly affects physician capacity and potential overtime costs.

- Charts closed before shift end: Track the percentage improvement. This impacts work-life balance and can influence retention.

- Incomplete charts at shift end: Monitor the reduction in unfinished charts. This affects billing timelines, coding accuracy, and continuity of care.

Quality and Revenue Metrics

- Chart completeness score: Rate HPI, ROS, MDM, and procedures on a 1-to-5 scale. Compare 20 to 30 baseline charts with AI-assisted charts.

- Billing level accuracy: Measure the percentage of charts that meet documentation criteria for the billed E/M level. Proper support for higher-complexity codes translates into recovered revenue.

- Revenue per patient: Use documented acuity, procedures, and critical care time to calculate revenue. Even a $30 to $40 increase per patient scales to significant annual impact.

Adoption Metrics

- AI activation rate: Track the percentage of encounters where the AI is used. A consistent usage rate above 80 percent indicates strong workflow fit. A declining rate signals friction.

- Physician satisfaction (NPS): Use a simple before-and-after survey: “How likely are you to recommend this tool?” This gives a quantifiable snapshot of clinician experience.

- Patients managed simultaneously: Compare physician multitasking with and without AI. If clinicians can safely manage more patients without quality loss, overall capacity increases.

Compliance and Risk Metrics

- Chart audit defensibility: Ask HIM reviewers if documentation would withstand CMS scrutiny. Does the MDM justify the chosen code level?

- Coding query response time: Complete documentation should reduce physician clarifications, speeding up billing and reducing rework.

- Missed billable elements: Assess whether the AI captures documentation for procedures, critical care, or high-acuity care that might otherwise be missed.

Choose metrics based on areas where improvement is realistic. If physicians already complete charts in under 10 minutes, targeting massive time reductions may be unproductive. Instead, focus on billing accuracy, revenue capture, or chart completion improvements that can show measurable gains.
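The activation-rate metric above lends itself to a simple weekly check. The following is a minimal sketch, assuming you can export weekly counts of AI-assisted and total encounters; the function names, data layout, and sample figures are hypothetical and not part of any specific product or EHR.

```python
def activation_rates(weekly_counts):
    """Return the AI activation rate (0-100) for each pilot week.

    weekly_counts: list of (ai_assisted_encounters, total_encounters)
    tuples, one per week. This layout is an assumption for illustration.
    """
    rates = []
    for ai_used, total in weekly_counts:
        if total <= 0:
            raise ValueError("total encounters must be positive")
        rates.append(100.0 * ai_used / total)
    return rates


def flag_adoption(rates, threshold=80.0):
    """List weeks below the threshold and report a week-over-week decline."""
    below = [week for week, rate in enumerate(rates, start=1) if rate < threshold]
    declining = len(rates) >= 2 and all(
        later < earlier for earlier, later in zip(rates, rates[1:])
    )
    return {"weeks_below_threshold": below, "declining": declining}


# Hypothetical sample data: three pilot weeks
sample = [(90, 100), (85, 100), (70, 100)]
rates = activation_rates(sample)
print(rates)
print(flag_adoption(rates))
```

A week below 80 percent or a steady decline is a prompt to ask physicians about friction, not an automatic verdict on the tool.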

Capture Baseline Data

Before introducing the AI scribe, collect two weeks of retrospective data on your pilot physicians to establish a clear baseline.

- Extract key EHR metrics: Charting time per patient, charts completed during the shift versus after hours, and average time from patient discharge to chart closure.

- Audit recent documentation: Review 20 to 30 recent charts using the same evaluation criteria you will apply post-implementation. Score for HPI completeness, billing level support, procedure documentation, and quality of medical decision-making.

- Survey participating physicians: Ask what aspects of charting take the most time, which documentation elements they commonly miss, how often they stay late to finish notes, and what concerns might make them hesitant to adopt an AI scribe.

Without this baseline, your pilot only yields anecdotes. With it, you generate measurable proof of ROI.
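To make the before-and-after comparison concrete, here is a minimal sketch of how baseline and pilot charting times might be compared. It assumes per-chart charting times in minutes exported from your EHR; the function names and sample values are hypothetical.

```python
from statistics import mean


def percent_change(baseline, pilot):
    """Percent change from baseline to pilot (negative means a reduction)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return 100.0 * (pilot - baseline) / baseline


def compare_charting_time(baseline_minutes, pilot_minutes):
    """Compare average charting time per patient before and during the pilot."""
    baseline_avg = mean(baseline_minutes)
    pilot_avg = mean(pilot_minutes)
    return {
        "baseline_avg": baseline_avg,
        "pilot_avg": pilot_avg,
        "percent_change": percent_change(baseline_avg, pilot_avg),
    }


# Hypothetical per-chart charting times in minutes
result = compare_charting_time([10, 12, 8], [6, 5, 7])
print(result)
```

The same comparison works for any metric you captured at baseline, such as charts closed before shift end.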

Pilot Execution Timeline

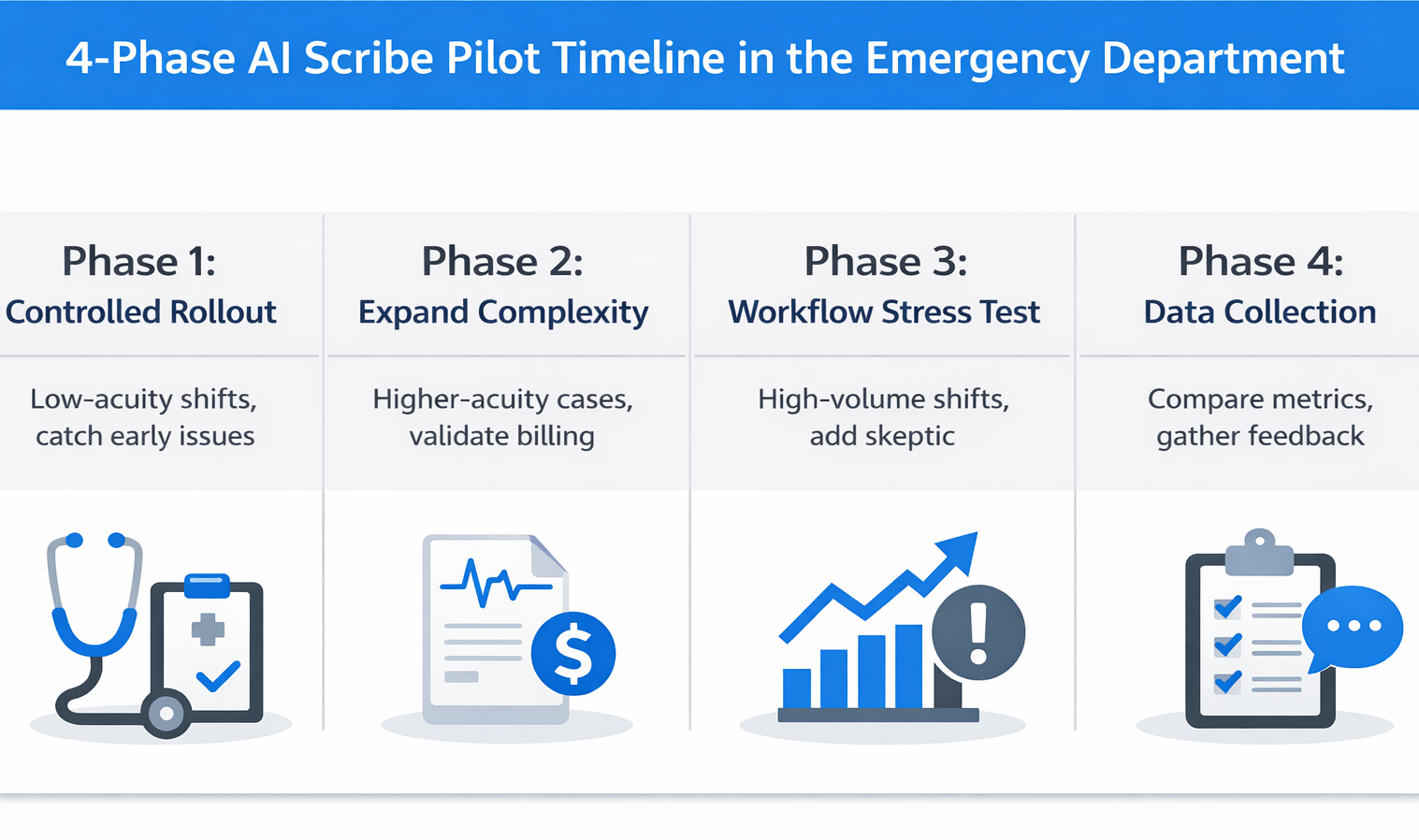

A phased timeline helps you gather meaningful data without overcommitting too early. Most pilots span four to eight weeks, but the pace should fit your team and environment.

Phase 1: Controlled Rollout (Weeks 1–2)

Quick Reference:

- Participants: 2 to 3 physicians working low-acuity day shifts

- Primary Goal: Build confidence and identify major issues early

- Key Actions:

  - Test AI accuracy on chief complaint and HPI

  - Track manual edits per note

  - Verify how the system handles interruptions

  - Validate multi-patient tracking (essential for ED workflow)

This phase is not about proving ROI. It’s about surfacing deal-breakers before expanding the pilot. If the AI struggles to capture routine documentation accurately without requiring heavy editing, it’s not ready for broader testing.

Focus on edit time per note. Some physician editing is expected—notes should reflect individual voice and clinical nuance—but if clinicians spend 10 minutes editing a note that would take 5 minutes to write manually, the tool is adding work, not reducing it.
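The edit-time comparison above can be tracked per note. This is a minimal sketch, assuming each physician logs the minutes spent editing an AI draft alongside an estimate of how long the same note would take to write manually; the function names and sample values are hypothetical.

```python
def adds_work(edit_minutes, manual_minutes):
    """True when editing the AI draft takes at least as long as writing manually."""
    return edit_minutes >= manual_minutes


def share_of_notes_adding_work(notes):
    """Fraction of notes where editing cost at least as much as manual writing.

    notes: list of (edit_minutes, estimated_manual_minutes) tuples.
    """
    if not notes:
        raise ValueError("at least one note is required")
    flagged = sum(1 for edit, manual in notes if adds_work(edit, manual))
    return flagged / len(notes)


# Hypothetical log: (minutes editing AI draft, estimated minutes to write manually)
log = [(10, 5), (2, 5), (3, 6), (7, 6)]
print(share_of_notes_adding_work(log))
```

A high share during Phase 1 lines up with the first early warning sign and is worth raising with the vendor before expanding the pilot.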

Test interruption management. What happens when a physician begins documenting for one patient, is pulled into a trauma room, then returns to finish the original note? In the ED, this happens constantly. A reliable tool should maintain context without introducing errors.

Watch for early warning signs:

- Physicians consistently spending more time editing than writing from scratch

- AI missing critical details, forcing clinicians to start over

- Clinicians abandoning the tool during busy shifts

Phase 2: Expand Complexity (Weeks 3–4)

Quick Reference:

- Participants: Same physicians from Phase 1, now handling higher-acuity cases

- Primary Goal: Test AI performance on complex cases and validate billing accuracy

- Key Actions:

  - Include chief complaints like chest pain, abdominal pain, and trauma

  - Test clinical decision support capabilities

  - Have Health Information Management (HIM) audit 10 AI-generated charts

  - Confirm validation from the revenue cycle team

This phase is about testing the AI’s ability to handle complexity. Introduce cases that require layered reasoning and multi-system documentation, such as chest pain workups, abdominal pain differentials, and trauma evaluations.

Evaluate clinical decision support. Does the AI recognize when to apply the HEART score for chest pain or when the PERC rule is relevant? Can it support multi-system trauma cases with appropriate clinical detail?

Have HIM audit at least 10 AI-generated charts using the same scoring criteria as your baseline review. Look for alignment between the documented care and the billed evaluation and management level. Are procedures captured correctly? Does the medical decision-making section meet CMS documentation standards?

This is the point where financial viability comes into focus. If revenue cycle leaders confirm that AI-generated charts support accurate billing levels and capture previously missed revenue, you have the foundation for a strong business case—one your CFO will understand.

Phase 3: Workflow Stress Test (Weeks 5–6)

Quick Reference:

- Participants: Add the skeptic physician to the group, focus on high-volume shifts

- Primary Goal: Evaluate performance under real emergency department pressure

- Key Actions:

  - Run the pilot during weekend and evening surges

  - Test simultaneous management of three to four patients

  - Observe physician behavior and time spent post-shift

  - Collect nurse feedback on any changes in workflow

This is where you find out if the AI can withstand real-world chaos.

Bring in your skeptic physician and shift the pilot to the busiest times—weekends, high-acuity evenings, or peak hours when documentation typically falls behind.

The core test: Can clinicians manage multiple patients at once using AI support while maintaining documentation quality?

Emergency departments operate on nonstop context switching. This is where most generic AI scribes fail. If the tool slows down or introduces errors when things get busy, it will not make it through full deployment.

Monitor charting efficiency. Are physicians still staying late or are they completing notes before their shift ends? Check with nursing staff as well—do they notice smoother handoffs or disruptions? Their perspective helps reveal hidden friction the pilot team may miss.

Phase 4: Data Collection and Feedback (Weeks 7–8)

Quick Reference:

- Focus: Shift from testing to measurement and evaluation

- Key Actions:

  - Compare pilot performance against baseline metrics

  - Audit 20 AI-generated charts using the same scoring criteria

  - Survey physicians for Net Promoter Score and qualitative feedback

  - Estimate revenue lift based on billing improvements

  - Create a decision brief for leadership

Take all defined metrics—charting time, note completion rates, billing accuracy, physician satisfaction—and compare them directly to your baseline data. Use consistent methodology to audit 20 AI-generated charts for HPI, MDM, procedures, and level of service.
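For the chart audit, one way to summarize the 1-to-5 completeness scores is to average each section and compare against baseline. The sketch below assumes each audited chart is recorded as a dictionary of section scores; the section keys, function names, and sample scores are hypothetical.

```python
from statistics import mean

# Sections scored on the 1-to-5 scale described in the metrics section
SECTIONS = ("HPI", "ROS", "MDM", "procedures")


def section_averages(charts):
    """Average score per section across a set of audited charts."""
    return {s: mean([chart[s] for chart in charts]) for s in SECTIONS}


def completeness_delta(baseline_charts, pilot_charts):
    """Change in average score per section (positive means improvement)."""
    base = section_averages(baseline_charts)
    pilot = section_averages(pilot_charts)
    return {s: pilot[s] - base[s] for s in SECTIONS}


# Hypothetical audit scores for two baseline and two AI-assisted charts
baseline = [
    {"HPI": 3, "ROS": 3, "MDM": 2, "procedures": 4},
    {"HPI": 4, "ROS": 3, "MDM": 3, "procedures": 4},
]
pilot = [
    {"HPI": 4, "ROS": 4, "MDM": 4, "procedures": 4},
    {"HPI": 5, "ROS": 4, "MDM": 4, "procedures": 5},
]
print(completeness_delta(baseline, pilot))
```

Reporting the change per section, rather than one blended score, shows whether gains come from MDM (which drives billing level) or from less consequential sections.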

Run a brief survey with your pilot physicians. Capture Net Promoter Score by asking how likely they are to recommend the tool. Include open-ended questions to learn what worked well, what created friction, and whether they would continue using it.
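The guide does not specify a scoring scale, so the sketch below assumes the standard Net Promoter Score convention: responses on a 0-to-10 scale, with 9 and 10 counted as promoters and 0 through 6 as detractors. The function name and sample ratings are hypothetical.

```python
def net_promoter_score(ratings):
    """Standard NPS: percent promoters (9-10) minus percent detractors (0-6)."""
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be between 0 and 10")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical responses from four pilot physicians
print(net_promoter_score([10, 9, 8, 6]))
```

With only a handful of pilot physicians, a single response moves the score substantially, so present the NPS alongside the open-ended feedback rather than on its own.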

Estimate the revenue impact per physician. If baseline documentation consistently led to level 4 billing and the pilot notes support appropriate level 5 coding, calculate that delta across your average patient volume.
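The level-shift calculation can be sketched as follows. The payment amounts below are placeholders, not actual reimbursement rates; substitute your own payer mix and fee schedule. The function name and the share of charts affected are also assumptions for illustration.

```python
def level_shift_revenue_delta(baseline_payment, pilot_payment,
                              affected_share, annual_patients):
    """Estimated annual revenue change per physician from better-supported coding.

    baseline_payment / pilot_payment: average payment at the old and new
        supported E/M level (placeholder values, not real rates).
    affected_share: fraction of charts whose supported level changed (0-1).
    annual_patients: patients seen per physician per year.
    """
    if not 0 <= affected_share <= 1:
        raise ValueError("affected_share must be between 0 and 1")
    return (pilot_payment - baseline_payment) * affected_share * annual_patients


# Placeholder figures only: $150 vs. $200 average payment,
# 25% of charts affected, 2,400 patients per physician per year
print(level_shift_revenue_delta(150.0, 200.0, 0.25, 2400))
```

Only count charts where HIM confirms the documentation supports the higher level; the goal is accurate coding, not upcoding.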

Summarize everything in a concise decision brief. This should highlight operational outcomes, financial impact, physician sentiment, and any identified risks. It is the foundation for a go or no-go decision.

Evaluation Checklist

Use this checklist during your pilot to systematically evaluate what matters for long-term success.

Clinical Accuracy and Workflow Fit

□ Captures complex EM presentations - Multi-system complaints, trauma, high-acuity visits documented without missing critical elements or flattening to generic templates

□ Handles EM clinical decision rules - HEART score, PERC rule, and Ottawa ankle rules integrated seamlessly (clinical copilots assist with decision-making, not just documentation)

□ Manages multiple simultaneous patients - Maintains context when switching between 3-4 active patients without errors or forcing physicians to close/restart each encounter

□ Integrates with EHR seamlessly - No extra applications, manual copy-pasting, or workflow-disrupting workarounds

Revenue Cycle Impact

□ Charts meet billed E/M criteria - HIM audit confirms sufficient history, exam, and MDM to support billed levels without compliance risk

□ Procedures and critical care documented - Captures laceration repairs, reductions, lines, critical care time (undocumented procedures = $100-300 lost per occurrence)

□ Fewer coding queries - Complete documentation reduces physician clarification requests

□ Revenue per patient improvement - DocAssistant AI's case study showed $399,168 additional annual revenue per physician. Measurable billing improvement validates financial value.

Physician Adoption and Satisfaction

□ Consistent activation rate above 80% - High usage indicates value. If activation drops below 80 percent, investigate workflow friction or feature gaps.

□ Skeptics see value - A successful pilot turns skeptics into advocates. If your skeptic remains unconvinced, dig into the reasons before scaling.

□ Physicians leave on time - If providers are still staying late to edit charts, documentation debt has shifted—not disappeared.

□ Physicians would resist giving it up - When pilot physicians push to keep the tool after the pilot ends, you’ve reached real product-market fit.

Compliance and Risk Mitigation

□ Charts audit-defensible for CMS - Medical decision-making supports the billed code. Documentation clearly reflects medical necessity and avoids triggers for downcoding.

□ Copilot flags missed billing elements - Prompts for procedures and critical care when criteria are met. Converts the tool from a passive scribe to an active revenue and compliance assistant.

□ Documentation defends medical necessity - Notes are complete enough to stand up to billing audits and provide legal defensibility.

Build the Business Case

Raw pilot data alone rarely persuades leadership. A well-structured business case, tailored to different stakeholder priorities, is what moves decisions forward.

For the CFO: Lead with Financial Impact

CFOs care about return on investment and margin contribution. If your pilot shows a $42 increase in billing revenue per patient, translate that into an annualized figure. For example, 12 emergency physicians seeing 2,400 patients each would generate approximately $1.2 million in additional revenue per year.
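The annualized figure in the example is straightforward multiplication. A minimal sketch using the numbers from the paragraph above (the function name is hypothetical):

```python
def annualized_revenue_lift(physicians, patients_per_physician, lift_per_patient):
    """Annual revenue lift across the group from a per-patient billing increase."""
    return physicians * patients_per_physician * lift_per_patient


# Figures from the example: 12 physicians, 2,400 patients each, $42 per patient
lift = annualized_revenue_lift(12, 2400, 42)
print(f"${lift:,}")  # $1,209,600, i.e. approximately $1.2 million
```

Swap in your own physician count, annual volume, and measured per-patient lift to get a department-specific figure.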

Factor in cost avoidance as well. Reduced overtime, lower reliance on locum coverage, and decreased physician turnover from improved satisfaction all carry meaningful financial value. Replacing one emergency physician can cost between $250,000 and $500,000 in recruitment expenses and lost productivity.

Be transparent about implementation costs—licensing, training, IT integration, and support. If your ROI model shows a payback period under 18 months with realistic cost assumptions, that’s a compelling business case.
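A simple payback calculation divides upfront cost by net monthly benefit. The sketch below assumes costs split cleanly into a one-time upfront amount and an annual recurring amount; the function name and all cost figures are hypothetical placeholders, and only the $1,209,600 benefit comes from the example above.

```python
import math


def payback_months(upfront_cost, annual_benefit, annual_recurring_cost):
    """Months to recover the upfront cost from net monthly benefit.

    Returns math.inf when recurring costs meet or exceed the benefit,
    since the investment is never recovered under those assumptions.
    """
    net_monthly = (annual_benefit - annual_recurring_cost) / 12
    if net_monthly <= 0:
        return math.inf
    return upfront_cost / net_monthly


# Placeholder costs: $150,000 upfront (integration, training) and
# $309,600 per year recurring (licensing, support)
months = payback_months(150_000, 1_209_600, 309_600)
print(months)
print("Under 18 months:", months < 18)
```

Run the calculation with conservative inputs as well, for example half the measured revenue lift, so the CFO sees how sensitive the payback period is to your assumptions.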

You can frame it like this:

“Our pilot demonstrated a 78 percent reduction in charting time and a $42 improvement in billing per patient. For 12 physicians each seeing 2,400 patients annually, that represents $1.2 million in new revenue and 720 hours of documentation time saved per physician each year.”

Use your own volume and documentation patterns to calculate specific ROI.

For the CMO: Lead with Quality and Retention

CMOs focus on compliance risk, documentation quality, and physician retention. AI scribing should be positioned as both a quality improvement and a workforce sustainability strategy.

AI-assisted documentation improves chart completeness and billing defensibility. If audit scores for billing level accuracy and medical decision-making quality increase during the pilot, that directly reduces exposure to CMS downcoding and post-payment audits.

Just as important, AI tools that reduce administrative burden help retain physicians in a high-turnover specialty. Emergency medicine continues to face staffing shortages and rising burnout rates. When a tool measurably reduces time spent documenting, it improves physician experience and creates a competitive advantage in recruiting.

You can frame the impact like this:

“Documentation burden is the top burnout driver in our satisfaction surveys. This tool reduces that burden by [X] hours per week while also improving chart quality. Considering that each physician departure costs between $300,000 and $500,000, improving retention through better work-life balance has significant strategic value.”

Include Physician Voices

Numbers persuade finance. Clinician testimonials persuade everyone else.

Highlight direct quotes from pilot participants—especially those who were skeptical at first but changed their stance by the end:

Example quote: “I didn’t think anything could handle our chaos, but this works. I’d resist going back.” – Dr. Smith, previously skeptical

These quotes build trust and signal real-world viability.

Also include Net Promoter Scores (NPS). If every pilot participant said they would recommend the tool and resist returning to baseline, that’s powerful social proof. It shows not just satisfaction but advocacy.

Avoid These Pitfalls

Testing only easy shifts

Don’t limit the pilot to low-volume day shifts. Deliberately include overnight coverage, weekend surges, and high-acuity scenarios. Tools that only work under ideal conditions will fail under real pressure.

Skipping revenue cycle involvement

Physicians evaluate clinical accuracy. Revenue cycle and HIM evaluate billing compliance. A tool that clinicians love but fails audits will not survive executive review. Bring HIM in from day one.

Assuming all AI scribes are the same

Generic scribes designed for primary care often break down in emergency medicine. Make sure your evaluation includes multi-patient handling, support for EM decision rules, and billing-critical prompts. The cheapest option may cost more if it can’t support your workflow.

Failing to define exit criteria

Set your thresholds upfront: “If charting time doesn’t drop by 40%, or billing scores decline, or fewer than 70% of pilot physicians would recommend it, we stop.” Clear failure criteria prevent sunk-cost thinking and protect trust.
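Writing the exit criteria down as an explicit check makes them harder to renegotiate later. This is a minimal sketch using the example thresholds above; the function name and parameter names are hypothetical, and your own thresholds may differ.

```python
def exit_reasons(charting_time_drop_pct, billing_score_change, recommend_pct,
                 min_time_drop=40.0, min_recommend=70.0):
    """Return the list of exit criteria that were triggered (empty means continue).

    charting_time_drop_pct: percent reduction in charting time vs. baseline.
    billing_score_change: pilot audit score minus baseline audit score.
    recommend_pct: percent of pilot physicians who would recommend the tool.
    """
    reasons = []
    if charting_time_drop_pct < min_time_drop:
        reasons.append("charting time did not drop enough")
    if billing_score_change < 0:
        reasons.append("billing scores declined")
    if recommend_pct < min_recommend:
        reasons.append("too few physicians would recommend it")
    return reasons


# Hypothetical pilot results
print(exit_reasons(45.0, 0.3, 75.0))   # no criteria triggered
print(exit_reasons(30.0, -0.1, 60.0))  # all three criteria triggered
```

Agree on the thresholds with the pilot team before launch and record them in the decision brief so the go or no-go call rests on criteria set in advance.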

Waiting too long to collect feedback

Hold weekly check-ins. Ask: What’s working? Where’s the friction? What almost made you stop using it? Small issues grow into deal-breakers if left unresolved until week four.

Conclusion

A successful pilot proves three things: physicians become more efficient, billing compliance and revenue improve, and clinicians don’t want to return to the old way of working.

The framework above gives you a structured path to gather that proof. You’ll know whether the tool performs in your ED’s operational reality—and you’ll have the data to justify the investment with hospital leadership.

Emergency departments deserve AI tools purpose-built for their environment. DocAssistant AI was created by an emergency medicine physician specifically for ED workflows: multi-patient management, real-time clinical decision support based on EM guidelines, and features designed to enhance documentation quality and billing defensibility in acute care.

Next step: Schedule a pilot planning session to map your metrics and timeline, or download the ED pilot checklist to start building with this framework.

About the Author

Nathan Murray, M.D. Emergency Medicine - Founder of DocAssistant

Dr. Nathan Murray is an Emergency Medicine trained physician and the founder of DocAssistant. With years of frontline clinical experience, Dr. Murray is passionate about using AI to streamline medical documentation and enhance clinical decision making.